Saturday, 19 December 2009

Horse in Motion - Again

Jan Tschichold - Penguin Composition Rules

2010 Update:

I checked with Penguin who still hold copyright and they have given me permission to set them for my own use but not to distribute further.

Wednesday, 16 December 2009

Six Guidelines for Good Typography

A short set of guidelines for Good Typography from the Hand & Eye website - typeset by Matt in the built-in Bookman font using TeXnicCenter.

Monday, 14 December 2009

Making Books Beautiful Again

Thursday, 10 December 2009

Sunday, 6 December 2009

Daniel Danger

XKCD's Powers of Ten

"I think". Charles Darwin July 1837.

The Horse in motion - 1878

Here is the set of stills in an animated GIF.

John Ruskin

Visual Observation and the Camera Lucida

Integrated text, drawings and images

Brain imaging skewed?

http://www.nature.com/news/2009/090427/full/4581087a.html

Placentogram

Sports Balls

Himalayan Panoramas

A classic Ed Ricketts List

Ricketts was not fictional. He was a groundbreaking marine biologist, ecologist, traveller and philosopher. I would recommend that the interested reader find the recently published collection of his travelogues "Breaking Through" (edited by K.A. Rodger), which has some of the best lists I have come across.

One of the most interesting pieces of writing in "Breaking Through" is a "Verbatim Transcription" of the trip that Ricketts and Steinbeck took to Baja, which was subsequently retold in "Log from the Sea of Cortez" by Steinbeck. Ricketts recorded things as the trip progressed and Steinbeck later tidied it up for publication. There are some excellent lists but the one below is one of my favourites and describes the blend of human and biological impressions made on Ricketts whilst in the La Paz area on Friday March 22 1940 (this appears on page 151 of Breaking Through).

"The peso is 5-1/2 or 6 to 1 here. I bought swank-looking huaraches for one dollar and one peso (7 pesos) and a fine iguana belt for 2.50 pesos; Epsom salts at a clothing store, Casa Gomez, one peso per kilo. I liked the blonde daughter. The girl in the pharmacy, I found entirely charming. The people are wonderful here. Ice is cinco centavos per kilo; not very good ice, tho. A quarter liter of Carta Blanca beer is 30 centavos per bottle, about 10 pesos per case, with 2.50 peso bottle return. I got 3 cigars from Sr. Gomez from his personal stock for 60 centavos, twisted - not wonderful, but satisfactory - Vera Cruz tobacco.

Borette is the poisonous puffer fish; its liver is said to be so poisonous that people use it to poison cats and flies.

Cornada is the hammerhead shark.

Barco is the red snapper.

Caracol (also Burrol) is the term for snails in general, particularly for the large conch for blowing like a horn.

Erizo is urchins, both kinds.

Abanico is sea fan, gorgonian.

Broma is barnacle.

Hacha is pinna, large clam."

Pareidolia

The extent of Sea Ice at the North Pole

I like the unusual way of looking down at the Earth to the North Pole.

Natural History of Manhattan

This NYT article gives a slideshow about a natural history of Manhattan over the past 400 years. The new map-based exhibit opened at the Museum of the City of New York. It is called "Mannahatta/Manhattan: A Natural History of New York City." The exhibit consists of historical accounts, maps and computer models that explore the ecology of Manhattan from the time before it became a city. The project also has its own website HERE and a book. This is a pretty good multi-media site that gives layers of meaning to those who now live in Manhattan.

Tabula Peutingeriana

Wikipedia has a good entry (http://en.wikipedia.org/wiki/Tabula_Peutingeriana) including a high resolution facsimile that can be downloaded.

Micrographia

Caslon Letter Founder 1728

The book itself is here (http://digicoll.library.wisc.edu/HistSciTech/subcollections/CyclopaediaAbout.html).

The image below is one of the first pages of chapter 3, Crenellations. It has some large Bembo italic for the chapter number and title.

Old School Press

This link = http://www.theoldschoolpress.com/osppic/bookfellrevivalinside.htm is to a book published by them called "The story of the revival of the Fell types in the 125 years from 1864" by Martyn Ould and Martyn Thomas, which describes and uses the type punches and matrices designed by John Fell and used by Oxford University Press. These original punches and types were rediscovered at O.U.P. in the 1860s, and the book tells the story of how they were used and shows some of the output.

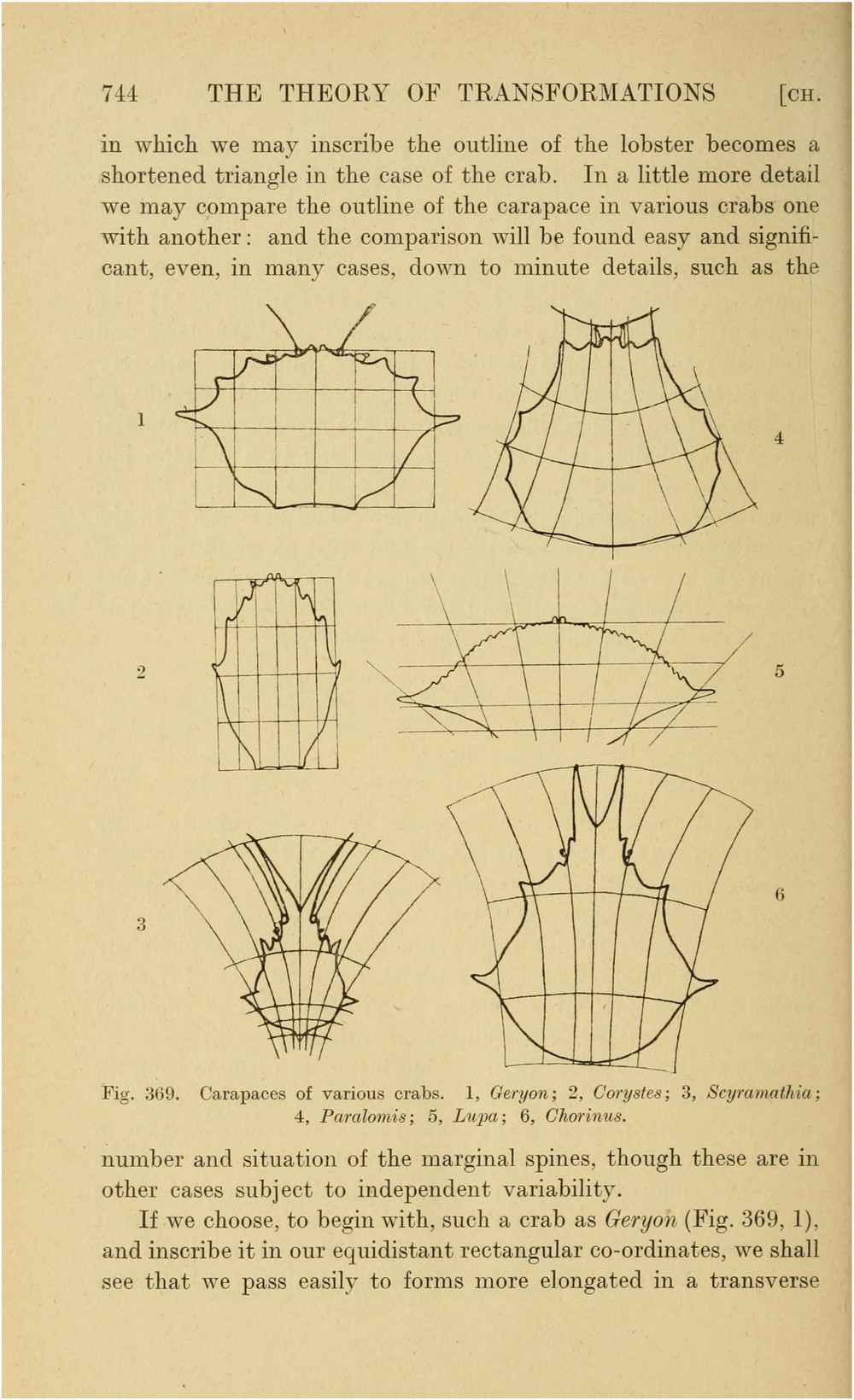

On Growth and Form

One of his best known ideas is that simple physical deformations of complex systems can give rise to whole families of apparently unrelated natural forms. The image shown below is page 744 of the first edition (you can find the whole book in electronic form at the Internet Archive = http://www.archive.org/details/ongrowthform1917thom). It shows how a complex shape representing the 2D outline of a crab's carapace can, after simple geometric transformations, give rise to a family of different carapace shapes.

Book typesetting with LATEX

The learning curve is reasonable and the defaults for all the fancy headers, figure numbering, contents etc. are well chosen. The TEX setup on a PC was easy and free - I use the LED editor and the MiKTeX 2.7 package.

This is typeset onto B5 paper in PDF format and I have now found a pretty good quality short-run digital printer in the UK to produce the final book output (this is not POD, which is generally low quality; the shortest print run is 50 copies). I'll keep you posted on how the whole process goes.

The attached JPG is deliberately low resolution - it's mainly to show the two page spread.

The elements of typographic style

An example;

Q: In your opinion, what developments or trends in the design industry look dangerous?

Bringhurst: The same developments and trends that look dangerous elsewhere: namely, ignorance and greed. The cult of personality and power, and the religion of money. These diseases are as visible in the typographic world as they are in the world of politics.

and a quote;

"The masters of the art, it seems to me, are those who never stop apprenticing".

Scaling images with well known objects

This also acts as a link to the blog of Matthew Ericson (http://www.ericson.net/content/), the deputy graphics director at The New York Times, where he manages a department of journalists, artists and programmers who produce the interactive information graphics for nytimes.com, as well as all the graphics for the print newspaper.

He has some nice maps and interactive graphics - his multi-decade analysis of the #1's of Michael Jackson is also interesting (http://www.ericson.net/content/2009/06/the-king-of-pop/).

Spiral mapped images

Here is an extensively mapped image database of Trajan's Column (http://cheiron.mcmaster.ca/~trajan/index.html). The site maps the continuous spiral layout of the column's artwork with cartoons that have annotations and links to high resolution images. The navigation allows you to move up and down the column and see either individual panels or a whole spiral piece around the column at a particular height. The image below shows the navigation mechanism.

Trajan's Column is much bigger than I had imagined - the human figures are about 2/3 full size and the inside of the column has a spiral staircase.

Matt

Sunday, 8 November 2009

Unbiased Stereology - Reprint and Sample

Friday, 6 November 2009

Statistics as a Liberal Art

Some quotes;

"I find it hard to think of policy questions, at least in domestic policy, that have no statistical component. The reason is of course that reasoning about data, variation, and chance is a flexible and broadly applicable mode of thinking. That is just what we most often mean by a liberal art."

and

"Here is some empirical evidence that statistical reasoning is a distinct intellectual skill. Nisbett et al. (1987) gave a test of everyday, plain-language, reasoning about data and chance to a group of graduate students from several disciplines at the beginning of their studies and again after two years. Initial differences among the disciplines were small. Two years of psychology, with statistics required, increased scores by almost 70%, while studying chemistry helped not at all. Law students showed an improvement of around 10%, and medical students slightly more than 20%. The study of chemistry or law may train the mind, but does not strengthen its statistical component."

You can get the full paper Here

Saturday, 18 July 2009

Quantitative Intuition

"One of the great difficulties experienced by people in mastering the quantitative science of electricity, arises from the fact that we do not number an electrical sense among our other senses, and hence we have no intuitive perception of electrical phenomena...an infant has distinct ideas about hot and cold, although it may not be able to put its ideas into words and yet many a student of electricity of mature years has but the haziest notion of the exact meaning of high and low potential, the electrical analogues of hot and cold."

William E. Ayrton, Practical Electricity, 1887, Preface.

Cited in The morals of measurement. Graeme Gooday. Cambridge University Press. 2004

Carlson Curve

"FACTORS DRIVING THE BIOTECH REVOLUTION

The development of powerful laboratory tools is enabling ever more sophisticated measurement of biology at the molecular level. Beyond its own experimental utility, every new measurement technique creates a new mode of interaction with biological systems. Moreover, new measurement techniques can swiftly become means to manipulate biological systems. Estimating the pace of improvement of representative technologies is one way to illustrate the rate at which our ability to interact with and manipulate biological systems is changing."

Here is Figure 1 from the paper.

FIG. 1. On this semi-log plot, DNA synthesis and sequencing productivity are both increasing at least as fast as Moore's Law (upwards triangles). Each of the remaining points is the amount of DNA that can be processed by one person running multiple machines for one eight hour day, defined by the time required for preprocessing and sample handling on each instrument. Not included in these estimates is the time required for sequence analysis. For comparison, the approximate rate at which a single molecule of E. coli DNA Polymerase III replicates DNA is shown (dashed horizontal line), referenced to an eight-hour day.

Sample processing time and cycle time per run for instruments in production are based on the experience of the scientific staff of the Molecular Sciences Institute and on estimates provided by manufacturers. ABI synthesis and sequencing data and Intel transistor data courtesy of those corporations. Pyrosequencing data courtesy of Mostafa Ronaghi at the Stanford Genome Technology Center. GeneWriter data courtesy of Glen Evans, Egea Biosciences. Projections are based on instruments under development.

McLuhan on TV & Comics as Visual media

"From the three million dots per second on TV, the viewer is able to accept, in an iconic grasp, only a few dozen, seventy or so, from which to shape an image. This image thus made is as crude as that of the comics. It is for this reason that ... the comics provide a useful approach to understanding the TV image, for they offer very little visual information or connected detail."

Understanding Media: The Extensions of Man. New York: McGraw-Hill, 1964 (pg 150).

Wells & Bush on Information

For example, the British science fiction writer H.G. Wells imagined in the late 1930s what a 'World Brain' would be like and what it would enable (Wells 1938). His idea was that in the future scholars would have access at their desks to the complete catalogue of the world's knowledge. Wells imagined that this would be enabled by photographic means - based on the idea of microfilm and microfiche. However, if one replaces talk of microfiche with digital data then we can see just how prescient Wells' vision was;

"our contemporary encyclopedias are still in the coach-and-horse phase of development, rather than in the phase of the automobile and the aeroplane. These observers realize that the modern facilities of transport, radio, photographic reproduction and so forth are rendering practicable a much more fully succinct and accessible assembly of facts and ideas than was ever possible before."

Wells was not alone. At the end of the Second World War the US engineer and science administrator Vannevar Bush wrote a piece for The Atlantic Monthly in which he reflected on the enormous changes that science and technology had brought in (Bush 1945). He observed that;

"There is a growing mountain of research. But there is increased evidence that we are being bogged down today as specialization extends. The investigator is staggered by the findings and conclusions of thousands of other workers - conclusions which he cannot find time to grasp, much less to remember, as they appear . . . Professionally our methods of transmitting and reviewing the results of research are generations old and by now are totally inadequate for their purpose."

These thinkers had enormous foresight. They had begun to observe a significant change in the volume of data and information arising in general, and in scientific and technical work in particular, and realised that there were real issues raised by the deluge of information.

How long is a Petabyte life?

In digital data terms a petabyte is a lot of data. 1 PB = 1,000,000,000,000,000 B = 10^15 bytes. Assuming a byte is 8 bits then a petabyte is 8 x 10^15 bits.

According to this paper, Google processes more than 20 Petabytes of data per day using its MapReduce program.

According to Kevin Kelly, writing in the New York Times (this reference), "the entire works of humankind, from the beginning of recorded history, in all languages" would amount to 50 petabytes of data.

These are all difficult to understand as they are abstract. So I tried to find a way of understanding what a Petabyte is in human terms. Scientific researchers estimate that the human retina communicates with the brain at a rate of 10 million bits per second (Reference HERE) or 10^7 bits per second. This sounds pretty impressive. How long does it take a human eye-brain system to move a petabyte of data (assuming that you could keep your eyes permanently open so that you are getting your full 10 million bits per second)?

By my calculations a year is 3.15 x 10^7 seconds. This means a total amount of data per year from retina to brain of 3.15 x 10^14 bits. Dividing 8 x 10^15 by 3.15 x 10^14 we get about 25 years. This is a long time to keep your eyes open!

If we take a normal human life to be the biblical standard of Psalms 90, "The days of our years are threescore years and ten", then a normal human creates about 2.8 petabytes in their life.

So I will define a new unit, the PetaBlife, with a symbol ℘ which is the number of standard human lifetimes required for a human retina to make a PetaByte of data.

If we take Google seriously, then each year they are processing the equivalent of 7.3 x 10^3 ℘.
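For anyone who wants to rerun the arithmetic, here is a rough Python sketch of the calculation. The function names are my own illustrative choices, and the constants are the rounded figures quoted in the post. The retinal rate is passed in as a parameter because the answer scales directly with it: at 10 million (10^7) bits per second a petabyte takes roughly 25 years of continuous viewing, whereas at 10^6 bits per second it would take about 254 years.

```python
# Back-of-envelope sketch of the retina-to-petabyte arithmetic.
# Constants are the rounded figures quoted in the post; the function
# names are hypothetical and only used for illustration.

BITS_PER_PETABYTE = 8e15    # 10^15 bytes x 8 bits per byte
SECONDS_PER_YEAR = 3.15e7   # rounded value used in the post
LIFETIME_YEARS = 70         # "threescore years and ten"
GOOGLE_PB_PER_DAY = 20      # MapReduce figure quoted in the post


def years_per_petabyte(retina_bits_per_second):
    """Years of continuous viewing needed to move one petabyte."""
    bits_per_year = retina_bits_per_second * SECONDS_PER_YEAR
    return BITS_PER_PETABYTE / bits_per_year


def petabytes_per_lifetime(retina_bits_per_second):
    """Petabytes sent from retina to brain in one 70-year lifetime."""
    return LIFETIME_YEARS / years_per_petabyte(retina_bits_per_second)


def lifetimes_per_petabyte(retina_bits_per_second):
    """Standard lifetimes needed for one petabyte (the PetaBlife)."""
    return years_per_petabyte(retina_bits_per_second) / LIFETIME_YEARS


if __name__ == "__main__":
    for rate in (1e7, 1e6):
        print(f"rate = {rate:.0e} bits/s")
        print(f"  years per petabyte:     {years_per_petabyte(rate):.1f}")
        print(f"  petabytes per lifetime: {petabytes_per_lifetime(rate):.2f}")
        print(f"  lifetimes per petabyte: {lifetimes_per_petabyte(rate):.2f}")
    print(f"Google petabytes per year: {GOOGLE_PB_PER_DAY * 365}")
```

Keeping the rate as an argument makes it easy to see how sensitive the "PetaBlife" is to the retinal bandwidth estimate.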

Matt

Thursday, 16 July 2009

No evidence that prayer alleviates ill health

In fact this is a great example of an objective and balanced analysis of the evidence for the efficacy of a treatment. Full report HERE.

Intercessory prayer for the alleviation of ill health

Leanne Roberts (1), Irshad Ahmed (2), Steve Hall (3), Andrew Davison (4)

(1) Hertford College, Oxford, UK. (2) Psychiatry, Capital Region Mental Health Center, Hartford, Connecticut, USA. (3) The Deanery, Southampton, UK. (4) St Stephen's House, Oxford, UK

Contact address: Leanne Roberts, Hertford College, Catte Street, Oxford, OX1 3BW, UK. leanne.roberts@hertford.ox.ac.uk. (Editorial group: Cochrane Schizophrenia Group.)

Cochrane Database of Systematic Reviews, Issue 3, 2009 (Status in this issue: Edited, commented)

Copyright © 2009 The Cochrane Collaboration. Published by John Wiley & Sons, Ltd.

DOI: 10.1002/14651858.CD000368.pub3

This version first published online: 15 April 2009 in Issue 2, 2009. Re-published online with edits: 8 July 2009 in Issue 3, 2009. Last assessed as up-to-date: 13 November 2008.

This record should be cited as: Roberts L, Ahmed I, Hall S, Davison A. Intercessory prayer for the alleviation of ill health. Cochrane Database of Systematic Reviews 2009, Issue 2. Art. No.: CD000368. DOI: 10.1002/14651858.CD000368.pub3.

Plain language summary

Intercessory Prayer for the alleviation of ill health

Intercessory prayer is a very common intervention, used with the intention of alleviating illness and promoting good health. It is practised by many faiths and involves a person or group setting time aside to petition God (or a god) on behalf of another who is in some kind of need, often with the use of traditional devotional practices. Intercessory prayer is organised, regular, and committed. This review looks at the evidence from randomised controlled trials to assess the effects of intercessory prayer. We found 10 studies, in which more than 7000 participants were randomly allocated to either be prayed for, or not. Most of the studies show no significant differences in the health related outcomes of patients who were allocated to be prayed for and those who were allocated to the other group.

Wednesday, 15 July 2009

Fact Checking

Matt

Saturday, 4 July 2009

R - the de facto Stats standard?

Here is an item on the R language in The New York Times, highlighting the spread of R as the package of choice for academics and commercial users. And a related NYT blog item is here. One quote is interesting - "Intel Capital has placed the number of R users at 1 million".

Friday, 3 July 2009

Data Mining

New Zealand Journal of Science 62 (2005): 126-128.

Matt

Monday, 29 June 2009

Digitising is NOT measuring

Digitising is the capture of digital data from a sensor. It is a new and low cost way of generating petabytes of data. However, simply taking an analogue input and transforming it to a digital image or signal does not mean it is a measurement.

There are millions and millions of digital images captured every day (perhaps billions). The vast majority of these are NOT measurements; they are 'snapshots'. In order to use digital imagery as a measurement modality for science one needs to take care of magnification issues (not always equal in X-Y), linearity of grey scale response and/or colour response, bit depth, illumination set-up to highlight features of interest, the effect of image compression, the frequency response of the lenses used, etc.
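As a small illustration of the first item on that list, here is a Python sketch of turning a distance measured in pixels into a physical length when the magnification differs between X and Y. The function name and the calibration values are assumptions made up for the example; in practice the scale factors would come from imaging a calibration target such as a stage micrometer.

```python
import math


def calibrated_distance(p1, p2, um_per_px_x, um_per_px_y):
    """Distance in micrometres between two pixel coordinates.

    p1 and p2 are (x, y) pixel positions. The two scale factors are the
    physical size of one pixel along each axis (hypothetical values here).
    """
    dx_um = (p2[0] - p1[0]) * um_per_px_x
    dy_um = (p2[1] - p1[1]) * um_per_px_y
    return math.hypot(dx_um, dy_um)


if __name__ == "__main__":
    a, b = (0, 0), (30, 40)
    # Square pixels: the 50 pixel separation is simply scaled.
    print(calibrated_distance(a, b, 0.5, 0.5))
    # Y magnification 10% different from X: the same pixels give a
    # different physical length.
    print(calibrated_distance(a, b, 0.5, 0.55))
```

Treating the pixel grid as square when it is not introduces an error that depends on the orientation of the feature, which is one reason a snapshot is not automatically a measurement.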

Matt

Saturday, 27 June 2009

Science Studies the Sardine (Ed Ricketts 1947)

Unfortunately for the sardine, and the extended marine food chain that it forms part of, Ricketts was killed in May 1948 and his deep ecological insight and campaigning capability never helped save the sardine. Much later, in 1998, the well-known fisheries ecologist Dr Daniel Pauly published a seminal paper in Science called 'Fishing Down Marine Food Webs'. This is both a citation classic (with over 1,000 citations) and a highly influential paper. Pauly was not aware of Ricketts' work when his paper was published.

Science Studies the Sardine

Mysterious Disappearance Focuses Attention on Woeful Lack of Information Regarding Billion Dollar Fish

By EDWARD F. RICKETTS, Pacific Biological Laboratories

The Herald has undoubtedly reported waterfront opinion correctly in stating that the shortage of sardines is being attributed to a change in the currents. I doubt very much if we can rely on such a simple explanation. I am reminded instead of an old nursery rhyme. Like the "farmer-in-the-Dell" there's a long chain of events involved. And you have to be familiar with all of them in order to know any one very clearly.

This is complicated still further by the fact that scientists haven't yet unravelled it all completely. But already quite a lot of information is available, and from most of the parts we can piece together some sort of picture puzzle. The result may be a labyrinth, but there's no way to avoid it. We just have to be patient and try to follow it through. Because I've done this myself after a fashion, perhaps I can be better than no guide at all.

By means of a very efficient straining apparatus in the gills, sardines are able to feed directly on the most primitive foodstuffs in the ocean. This so-called "plankton," chiefly diatoms (free floating microscopic plants) is the product of oceanic pastures, and like grain and grass and root crops, bears a direct relation to sunlight and fertilizer. Except that in the oceans of course you don't have to depend on the rains for moisture.

Some of these ocean pastures produce more per acre than the others. This is due to variations in the amount of fertilizer brought to the surface by a process called "upwelling."

DUST TO DUST

Only the upper layers of water receive enough light to permit the development of plants. In most places, when the sunlight starts to increase in spring, these plants grow so rapidly that they deplete the fertilizer and die out as a result. In the familiar pattern of dust to dust and ashes to ashes, their bodies disintegrate and release the chemical elements. These, in the form of a rain of particles, constantly enrich the dark-deeper layers.

On the California coast - one of the few places in the world, incidentally, where this happens - winds from the land blow the surface waters of the shore far out to sea. To replace these waters, vertical currents are formed which bring up cool, fertilizer laden waters from the depths to enrich the surface layers, maintaining a high standard of fertility during the critical summer months. Since the seeds of the minute plants are everywhere ready and waiting to take advantage of favorable conditions, these waters are always blooming. It's a biological rule that where there's food, there's likely to be animals to make use of it. Foremost among such animals is the lowly sardine.

FISH "FACTORY"

In the long chain, this is the first link. Reproductive peculiarities of the sardine itself provide the second. Above their immediate needs, adult sardines store up food in the form of fat. During the breeding season, ALL this fat is converted into eggs and sperm. The adult sardine is nothing but a factory for sexual products.

The production of plankton is known to vary enormously from year to year. If feeding conditions are good, the sardines will all be fat, and each will produce great quantities of eggs. If the usual small percentage of eggs survive the normal hazards of their enemies, a very large year class will be hatched.

ABUNDANT ENEMIES

If then feeding conditions are still good at the time and place of hatching (spring in southern California and Northern Mexico), huge quantities of young sardines will be strewn along the Mexican coast by south-flowing currents. Here their enemies are also abundant. Including man - chiefly in the form of bait fishermen from the tuna clippers. Those surviving this hazard move back into California waters, extending each year further and further up the coast, the oldest finally migrating each summer clear up to Vancouver Island. All return during the winter to the breeding ground. This process builds up until a balance is established. Or until man, concluding that the supply is in fact endless, builds too many reduction plants on that assumption.

On the other hand, the small year classes resulting from decreased plankton production, will in themselves deplete the total sardine population. If then the enemies - again chiefly man - try desperately to take the usual amounts, by means for instance of more and larger boats, greater cruising radius, increased fishing skills, etc, sardine resources can be reduced to the danger point.

FORECAST FEASIBLE

All this being true, we might be able to forecast the year classes if we could get to know something about the conditions to which the adults are subjected at a given time. Their eggs are produced only from stored fat. The fat comes only from plankton. And there are ways of estimating the amount of plankton produced.

An investigator at the Scripps Institution of Oceanography long ago realized the fundamental importance of plankton. He has been counting the number of cells in daily water samples for more than 20 years. This scientist, now retiring perhaps discouraged because the university recently hasn't seen fit even to publish his not-very-spectacular figures, ought to be better known and more honored. He deals with the information of prime importance to the fisheries. But in order to get this information in sufficient detail, you have to know the man, and get him to write you about it personally.

FIGURES NEEDED

Figures from only a single point have very limited value. We should have data of this sort for a number of places up and down the coast so as to equalize the variations; and for many years. But we're lucky to have them even for La Jolla. The graph shows 1926, 1931, 1934 to have been poor years, but during those times the total sardine landings were still apparently well within the margin of safety. Years 1941-42 were also poor. But during this time the fishery was bringing in really large quantities. The evidence is that very lean adults resulted from these lean years, producing few eggs. And we would have done well to take only a few of them and their progeny. A glance at the chart labelled "California Sardine Landings" will show what actually happened instead.

There's still another index of sardine production. This time the cat of the wife of the farmer-in-the-dell catches herself a fine succulent mouse.

TEMPERATURE KNOWN

We know that the food of the sardine depends directly on fertility. And that fertility depends on upwelling. Obviously, the water recently brought up from depths is colder than at the surface. When upwelling is active, surface temperatures will be low. Now we can very easily calculate the mean annual sea water temperatures from the readings taken daily at Hopkins Marine Station and elsewhere. The chart which has been prepared to show these figures bears out again the fact that 1941 and '42 must have been unfortunate years for the sardines. Following those years we should have taken fewer so as not to deplete the breeding stock.

FISHING Vs. FISH

Instead, each year the number of canneries increased. Each year we expended more fishing energy pursuing fewer fish. Until in the 1944-46 seasons we reached the peak of effort, but with fewer and fewer results. Each year we've been digging a little further into the breeding stock.

A study of the tonnage chart will show all this, and more. The total figures, including Canada, tell us pretty plainly that the initial damage was done in 1936-37, when the offshore reduction plants were being operated beyond regulation. And the subsequent needs of the war years made it difficult then also for us to heed the warnings of the scientists of the Fish and Game Division.

THE ANSWER

The answer to the question "Where are the sardines?" becomes quite obvious in this light. They're in the cans! The parents of the sardines we need so badly now were being ground up then into fish meal, were extracted for oil, were being canned; too many of them, far too many.

But the same line of reasoning shows that even the present small breeding stock, given a decent break, will stage a slow comeback. This year's figures from San Pedro, however, indicate no such decent break.

During this time of low population pressure, the migrating few started late, went not far, and came back earlier than usual. Actually we had our winter run during August and September when the fishermen were striking for higher rates. By the time we had put our local house back in order, the fish had gone on south. Many had failed to migrate in the first place, and were milling around their birthplace in the crowded Southern California waters. And the San Pedro fleet, augmented by out-of-work boats from the northern ports, has been making further serious inroads into the already depleted breeding stock.

WE WILL FORGET

My own personal belief is nevertheless optimistic. Next fall I expect to see the fish arrive, early again, and in somewhat greater quantity. But a really good year will be an evil thing for the industry. And still worse for Monterey. Because we'll forget our fears of the moment, queer misguided mortals that we are! We'll disregard conservation proposals as we have in the past; we'll sabotage those already enacted. And the next time this happens we'll be really sunk. Monterey will have lost its chief industry. And this time for good!

If on the other hand, next year is moderately bad - not as bad as this year, Heaven forbid, but let's hope it's decently bad! - maybe we'll go along with such conservation measures as will have been suggested by a Fish and Game Commission which in the past has shown itself far, far too deferent to the wishes of the operators - or maybe to their lobby!

SWEDEN'S EXAMPLE

We have before us our own depleting forests. We have Sweden's example; now producing more forest products every year than it did a hundred years back when the promise of depletion forced the adoption of conservation measures. We have this year's report on our own halibut landings, like the old days - the result solely of conservation instituted by a United States-Canadian commission which took over in the face of obvious depletion.

If we be hoggish, if we fail to cooperate in working this thing out, Monterey COULD go the way of Nootka, Fort Ross, Notley's Landing, or communities in the Mother Lode, ghost towns that faded when the sea otter or lumber or the gold mining failed. If we'll harvest each year only that year's fair proportion (and it'll take probably an international commission to implement such a plan!) there's no reason why we shouldn't go on indefinitely profiting by this effortless production of sea and sun and fertilizer. The farmer in the dell can go on with his harvesting.

This text from material reprinted for educational purposes by Roy van de Hoek, in its entirety from the newspaper:

MONTEREY PENINSULA HERALD 12th Annual Sardine Edition, p.1,3 March 7, 1947.

Thursday, 18 June 2009

"Fact free science"

"I discuss below a particular example of a dynamic system 'Turing's morphogenetic waves' which gives rise to just the kind of structure that, as a biologist, I want to see. But first I must explain why I have a general feeling of unease when contemplating complex systems dynamics. Its devotees are practicing fact-free science. A fact for them is, at best, the output of a computer simulation: it is rarely a fact about the world."

John Maynard Smith, "Life at the Edge of Chaos?," The New York Review of Books, March 2, 1995

Tuesday, 16 June 2009

Microscopy Site

The humble light microscope is an icon of research science. It has a practical 'resolution' that on a good day almost achieves the theoretical resolution that was calculated by Abbe in the 1880's. HERE is a great and very large website dedicated to microscopy. Enjoy.
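As a rough worked example of that theoretical limit, the usual textbook form of Abbe's result for lateral resolution is d = wavelength / (2 x NA), where NA is the numerical aperture of the objective. The Python sketch below just evaluates that formula; the function name and the example wavelength and apertures are illustrative assumptions, not values taken from the linked site.

```python
def abbe_limit_nm(wavelength_nm, numerical_aperture):
    """Smallest resolvable separation (nm) from d = wavelength / (2 * NA)."""
    if numerical_aperture <= 0:
        raise ValueError("numerical aperture must be positive")
    return wavelength_nm / (2.0 * numerical_aperture)


if __name__ == "__main__":
    green_light_nm = 550  # assumed mid-visible wavelength
    # Assumed apertures: a low power dry lens and a high NA oil lens.
    for na in (0.25, 1.4):
        d = abbe_limit_nm(green_light_nm, na)
        print(f"NA = {na}: about {d:.0f} nm")
```

With green light and a high aperture oil immersion lens this comes out at roughly 200 nm, which is the figure usually quoted for the limit of a conventional light microscope.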

Ancient Geometry, Stereology & Modern Medics

This is a paper HERE that Vyvyan Howard and I had fun writing a few years back and published in Chance magazine. It's a popular science type article on “Ancient Geometry, Stereology & Modern Medics”, that was originally written in 2002 and which I have recently tidied up into a PDF. It links ancient ideas in geometry with random sampling and state-of-the-art scientific imaging.

One of the key characters is the famous mathematician Zu Gengzhi, whose father Zu Chongzhi (in the picture) was also a famous mathematician.

Zu Gengzhi was the first to describe what we now refer to as Cavalieri's theorem;

"The volumes of two solids of the same height are equal if the areas of the plane sections at equal heights are the same."

The translation by Wagner (HERE) is given as

If blocks are piled up to form volumes,

And corresponding areas are equal,

Then the volumes cannot be unequal.
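The principle can be checked numerically by piling up thin slices, which is also the idea behind estimating a volume from section areas. The Python sketch below is only an illustration under my own assumptions: it uses a classic textbook pair of solids (a hemisphere, and a cylinder with a cone removed), not an example taken from the paper, and the function names are hypothetical. Both solids have radius r and height r, and at every height h the section area is pi x (r^2 - h^2), so the summed volumes should agree with each other and with 2/3 x pi x r^3.

```python
import math


def volume_from_sections(area_at_height, height, n_slices=10_000):
    """Sum section areas times slice thickness (midpoint rule)."""
    dz = height / n_slices
    total_area = sum(area_at_height((i + 0.5) * dz) for i in range(n_slices))
    return total_area * dz


def hemisphere_section(r):
    """Section area of a hemisphere of radius r at height h above its base."""
    return lambda h: math.pi * (r * r - h * h)


def cylinder_minus_cone_section(r):
    """Cylinder of radius r and height r with an inverted cone removed.

    The cone has its apex at the centre of the base, so at height h the
    removed disc has radius h.
    """
    return lambda h: math.pi * r * r - math.pi * h * h


if __name__ == "__main__":
    r = 1.0
    v_hemisphere = volume_from_sections(hemisphere_section(r), r)
    v_cylinder_minus_cone = volume_from_sections(cylinder_minus_cone_section(r), r)
    print(v_hemisphere, v_cylinder_minus_cone, 2.0 / 3.0 * math.pi * r ** 3)
```

Equal areas at every height force equal sums, which is the discrete version of "the volumes cannot be unequal".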

PhenoQuant™

Inspired by a 2004 paper by Vyvyan Howard et al (HERE) I have been trying to develop a business model whereby high quality quantification of the phenotypic impact of specific gene knock-outs in well defined strains of transgenic mice is provided as a service.

The quantification is achieved with protocolised stereological techniques, and clients get the specific data they need on time and in full. At the same time the parent business builds a proprietary database that can be data-mined to provide new insights and discoveries.

I worked with a Masters student, James Stone, at the Manchester Science Enterprise Centre in 2007 to develop the concept and try to understand the market positioning for such a business, tentatively called PhenoQuant™. James and I did some outline work on a business plan and he explored routes for funding the opportunity. The plan is not mature and it is currently dormant, but this is a real opportunity to bring quantification to one key aspect of modern gene-based biomedical research work.

Monday, 15 June 2009

The Art of the Soluble

Galileo the quantifier

Galileo during his period in Padua before moving to Florence in 1610.

Cited in; The science of measurement: A historical survey. Herbert Arthur Klein. Dover 1988. p509.

The Data Deluge - 1987 Style

This article describes how mad baseball fans can get "...the ultimate service for the most avid baseball addict: daily, detailed reports on 16 minor leagues encompassing 154 teams and more than 3,000 players". This data deluge is made possible by facsimile machines installed in more than 150 minor league ball parks.

'Official scorers or a club official are supposed to file the information within an hour after the game and then the phone-line cost to the team is minimal since the fax transmissions take about 40 seconds each.'